Multiple Processor: A multiprocessor system is intended to improve computing speed, performance, and cost-effectiveness, and to provide enhanced availability and reliability. A multiprocessor is a computer system with two or more central processing units (CPUs) that share a common main memory and the peripherals.

This allows programs to be processed simultaneously. The main objective of using a multiprocessor is to improve the system’s execution speed, with other objectives being fault tolerance and application matching. A good illustration of a multiprocessor is a single central tower attached to two computer systems.

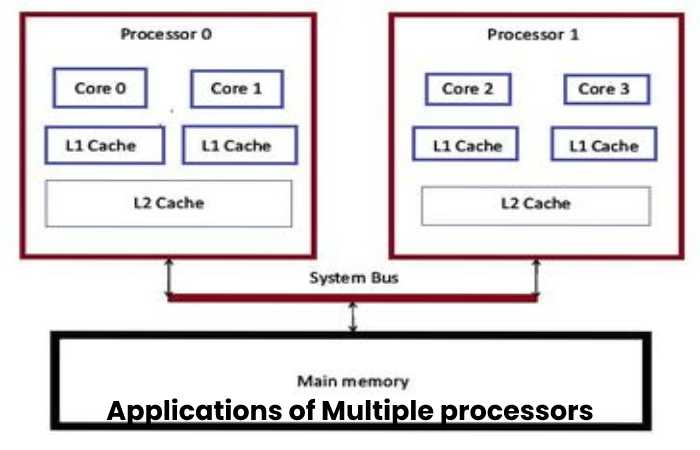

Applications of Multiple Processors

- As a uniprocessor, such as single instruction, single data stream (SISD).

- As a multiprocessor, such as single instruction, multiple data stream (SIMD), which is usually used for vector processing.

- Multiple series of instructions in a single perspective, such as multiple instruction, single data stream (MISD), which is used to describe hyper-threading or pipelined processors.

- Inside a single system for executing multiple, separate series of instructions in multiple perspectives, such as multiple instruction, multiple data stream (MIMD).
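The four categories above (commonly known as Flynn's taxonomy) differ only in whether there is one or more than one instruction stream and data stream. A minimal sketch of that rule follows; the names `FlynnClass` and `classify` are made up for this illustration.

```python
from enum import Enum


class FlynnClass(Enum):
    SISD = "single instruction, single data stream"
    SIMD = "single instruction, multiple data stream"
    MISD = "multiple instruction, single data stream"
    MIMD = "multiple instruction, multiple data stream"


def classify(instruction_streams: int, data_streams: int) -> FlynnClass:
    """Map stream counts to one of the four categories (hypothetical helper)."""
    if instruction_streams < 1 or data_streams < 1:
        raise ValueError("stream counts must be at least 1")
    multi_instr = instruction_streams > 1
    multi_data = data_streams > 1
    if multi_instr and multi_data:
        return FlynnClass.MIMD
    if multi_instr:
        return FlynnClass.MISD
    if multi_data:
        return FlynnClass.SIMD
    return FlynnClass.SISD
```

For example, `classify(1, 8)` returns `FlynnClass.SIMD`, the vector-processing case, while `classify(4, 4)` returns `FlynnClass.MIMD`.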

Benefits of using a Multiple Processor

- Enhanced performance.

- Multiple applications.

- Multi-tasking inside an application.

- High throughput and responsiveness.

- Hardware sharing among CPUs.

Multicomputer

A multicomputer system is a computer system with multiple processors that are connected together to solve a problem. Each processor has its own memory, which is accessible only by that particular processor, and the processors communicate with each other via an interconnection network.

Because a multicomputer can pass messages between its processors, a task can be divided among the processors to complete the whole job. Hence, a multicomputer can be used for distributed computing. It is more cost-effective and easier to build a multicomputer than a multiprocessor.
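The idea of dividing a job among nodes that only exchange messages can be sketched as follows. This is an illustration under loud assumptions: Python threads stand in for separate computers, `queue.Queue` objects stand in for the interconnection network, and the names `node` and `distributed_sum` are hypothetical.

```python
import queue
import threading


def node(node_id, inbox, outbox):
    """One node: it sees only what arrives in its inbox (no shared data)."""
    chunk = inbox.get()              # receive the work as a message
    partial = sum(chunk)             # compute on the node's private copy
    outbox.put((node_id, partial))   # send the result back as a message


def distributed_sum(values, node_count=4):
    """Split `values` across nodes and combine their partial sums."""
    inboxes = [queue.Queue() for _ in range(node_count)]
    results = queue.Queue()
    workers = [
        threading.Thread(target=node, args=(i, inboxes[i], results))
        for i in range(node_count)
    ]
    for worker in workers:
        worker.start()
    # Each node gets every node_count-th element, starting at its own index.
    for i in range(node_count):
        inboxes[i].put(list(values[i::node_count]))
    for worker in workers:
        worker.join()
    total = 0
    for _ in range(node_count):
        _, partial = results.get()
        total += partial
    return total
```

On a real multicomputer the queues would be network links and each node would be a separate machine, but the structure (send work, compute locally, send results) is the same.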

Difference Between Multiple Processors and Multicomputer

A multiprocessor supports parallel computing, and a multicomputer supports distributed computing. A multiprocessor system is a single computer that operates with multiple CPUs, whereas a multicomputer system is a cluster of computers that operate as a single computer.

A multiprocessor is a system with two or more central processing units (CPUs) capable of performing multiple tasks. A multicomputer system has multiple processors attached via an interconnection network to perform a computation task. A multicomputer is easier and more cost-effective to construct than a multiprocessor. Programming, on the other hand, tends to be easier on a multiprocessor system and more difficult on a multicomputer system.
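One reason programming tends to be easier on a multiprocessor is that all workers can read and write the same memory directly. The sketch below illustrates that shared-memory style with Python threads; it is only a model of the programming approach (CPython threads do not necessarily run on separate CPUs), and `shared_memory_count` is a hypothetical name.

```python
import threading


def shared_memory_count(worker_count=4, increments=1000):
    """Several workers update one counter that lives in shared memory."""
    counter = {"value": 0}
    lock = threading.Lock()  # protects the shared counter from lost updates

    def worker():
        for _ in range(increments):
            with lock:
                counter["value"] += 1

    threads = [threading.Thread(target=worker) for _ in range(worker_count)]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return counter["value"]
```

No data is copied or sent here. The cost of that convenience is the lock: shared data has to be protected whenever more than one worker may change it.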

Uses of Multiple Processor

A multiprocessing system uses more than one processor to process any given workload, increasing the performance of a system’s application environment beyond a single processor’s capability. This permits tuning the server network’s performance to yield the required functionality.

As described in “Server Availability,” this feature is known as scalability and is the most important aspect of multiprocessing system architectures. A scalable system architecture allows network administrators to tune a server network’s performance based on the number of processing nodes required.

The most common multiprocessor architecture has been a collection of processors arranged in a loosely coupled configuration and interacting over a communication channel. This communication channel might not necessarily consist of a conventional serial or parallel arrangement.

Instead, it can be composed of shared memory, used by processors on the same board or even over a backplane. These interacting processors operate as independent nodes, using their own memory subsystems.

The Most Common Use of Multiple Processor

Recently, the embedded server board space has been arranged to accommodate tightly coupled processors, either as a pair or as an array. These processors share a common bus and addressable memory space. A switch connects them, and interprocessor communication is accomplished through message passing.

The processors operate as a single node in the overall system configuration and appear as a single processing element. Additional loosely coupled processing nodes increase the overall processing power of the system. When more tightly coupled processors are added, the processing power of a single node increases.

Pentium and Xeon processors are currently named separately. These processors have undergone many stages of refinement over the years. For example, the Xeon processors are designed for either network servers or high-end workstations.

Similarly, Pentium 4 microprocessors were intended solely for desktop deployment, although Xeon chips had also been called “Pentiums” to denote their family ancestry. The Xeon family consists of two main branches: the Xeon dual-processor (DP) chip and the Xeon multiprocessor (MP).

Multiple Processing Features

Dual-processor systems are designed for use exclusively with dual-processor motherboards, fitted with either one or two sockets. Multiprocessor systems usually have room on the board for four or more processors, although no minimum requirement exists. Xeon MPs are not designed for dual-processor environments due to specific features of their architecture, and they are more expensive.

Multiprocessors are designed to work together in handling large databases and business transactions. Dual processors are developed to run at higher clock speeds than multiprocessors, making them more efficient at handling high-speed mathematical computations.

When several multiprocessors work as a group, they outperform their DP cousins even at slower clock speeds. Although the network operating system (NOS) can run multiprocessor systems using Symmetrical Multiprocessing (SMP), it must be configured to do so. Simply adding another processor to the motherboard without properly configuring the NOS may result in the system ignoring the additional processor altogether.
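A quick way to check whether the operating system actually sees an added processor is to ask it how many logical CPUs it reports. The sketch below uses Python's standard `os` module; the function names are hypothetical, and falling back to 1 when the count is unknown is an assumption made for this example.

```python
import os


def visible_processors():
    """Number of logical CPUs the operating system reports."""
    count = os.cpu_count()  # may be None if the count cannot be determined
    return count if count is not None else 1  # assumption: treat unknown as 1


def usable_processors():
    """Number of CPUs this process is allowed to run on, where available."""
    if hasattr(os, "sched_getaffinity"):  # present only on some Unix systems
        return len(os.sched_getaffinity(0))
    return visible_processors()


if __name__ == "__main__":
    print("CPUs reported by the OS:", visible_processors())
    print("CPUs usable by this process:", usable_processors())
```

If the reported number does not increase after a processor is installed, the operating system is probably not configured to use it.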

Types of Multiple Processor

Various categories of multiprocessing systems can be identified. They include

- Shared nothing

- Shared disks

- Shared memory cluster

- Shared memory

In shared-nothing MP systems, each processor is a complete standalone machine, running its own copy of the OS. The processors do not share memory, caches, or disks but are connected loosely through a LAN. Though such systems enjoy the advantages of good scalability and high availability, they have the disadvantage of using an uncommon message-passing programming model.

In shared-disk MP systems, the processors also have their own memory and cache, but they run in parallel and can share disks. They are loosely coupled through a LAN, with each one running a copy of the OS. Again, communication between processors is accomplished through message passing.

The advantages of shared disks are that disk data is addressable and coherent, and that high availability is more easily obtained than with shared-memory systems. The disadvantage is that only limited scalability is possible due to physical and logical access bottlenecks to shared data.

In a shared memory cluster, all processors have their own memory, disks, and I/O resources, while each processor runs a copy of the OS. However, the processors are tightly coupled through a switch, and communication between the processors is accomplished through the shared memory.

In a strictly shared memory arrangement, all the processors are tightly coupled through a high-speed bus (or switch) on the same motherboard. They share the same global memory, disks, and I/O devices. Because the OS is designed to exploit this architecture, only one copy runs across all processors, making this a multithreaded memory configuration.

SMP Commercial Advantages in Multiple Processor

The commercial advantages of the use of symmetrical multiprocessing include

- Increased scalability to support new network services without the need for major system upgrades

- Support for improved system density

- Increased processing power without the incremental costs of support chips, chassis slots, or upgraded peripherals

- True concurrency with simultaneous execution of multiple applications and system services

SMP technology is well suited to network operating systems that run many different processes in parallel. This includes multithreaded server systems used for storage area networking or online transaction processing.

Multiple Processing Disadvantages

Multiprocessing systems deal with four problem types associated with control processes or with the transmission of message packets to synchronize events between processors. These types are

- Overhead: The time wasted in achieving the required communications and control status before actually beginning the client’s processing request

- Latency: The time delay between initiating a control command or sending the command message and when the processors receive it and begin initiating the appropriate actions

- Determinism: The degree to which the processing events are precisely executed

- Skew: A measurement of how far apart events occur in different processors when they should occur simultaneously

The various ways these problems arise in multiprocessing systems become more understandable when considering how a simple message is interpreted within such an architecture. Suppose a message-passing protocol sends packets from one of the processors to the data chain linking the others.

Each processor must interpret the message header and then pass the packet along if it is not intended for that processor. Plainly, latency and skew will grow with each pause for interpretation and retransmission of the packet. When additional processors are added to the system chain (scaling out), determinism is adversely impacted.
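The growth of latency and skew along the chain can be made concrete with a small model. It is a deliberately simplified sketch: it assumes every hop (interpret the header, then retransmit) costs the same fixed delay, and the function names and numbers are hypothetical.

```python
def arrival_times(processor_count, hop_delay):
    """Arrival time of a packet at each receiving processor in the chain.

    Processor 0 is the sender; processor k receives the packet after k hops.
    Assumption: every hop costs the same `hop_delay`.
    """
    if processor_count < 2:
        raise ValueError("need a sender and at least one receiver")
    return [k * hop_delay for k in range(1, processor_count)]


def latency_and_skew(processor_count, hop_delay):
    """Worst-case latency and skew (latest minus earliest arrival)."""
    times = arrival_times(processor_count, hop_delay)
    worst_latency = max(times)
    skew = max(times) - min(times)
    return worst_latency, skew
```

With a hop delay of 2 time units, a chain of 4 processors gives a worst-case latency of 6 and a skew of 4. Scaling out to 8 processors raises these to 14 and 12, which shows why each added processor makes simultaneous action harder to achieve.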

Avoiding this requires a custom hardware implementation on circuit boards designed for multiprocessing systems, whereby a dedicated bus, apart from the general-purpose data bus, is provided for command-and-control functions. In this way, determinism can be maintained regardless of the scaling size.

Conclusion

Another common but avoidable problem occurs when processors designed to use different clock speeds are installed on multiprocessor server boards. For multiprocessors to work correctly together, they should have identical speed ratings.

Although in some cases a faster processor can be clocked down to work in the system, the problems that commonly occur between mismatched processors will cause operational glitches beyond the tolerance of server networks. The safest approach with multiprocessor server boards is to install processors that their manufacturer guarantees to work together. These will generally be matched according to their family, model, and stepping versions.
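The matching rule can be expressed as a simple check that reports which attributes differ between installed processors. This is an illustrative sketch only: the `CpuId` type, the `mismatches` function, and the sample values are hypothetical, and a manufacturer's compatibility list remains the authoritative source.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CpuId:
    """Identification values for one installed processor (hypothetical)."""
    family: int
    model: int
    stepping: int
    speed_mhz: int


def mismatches(cpus):
    """Return the names of the fields that differ among the processors."""
    fields = ("family", "model", "stepping", "speed_mhz")
    return [
        name for name in fields
        if len({getattr(cpu, name) for cpu in cpus}) > 1
    ]
```

An empty result means the processors agree on family, model, stepping, and speed rating. Any name in the result points at a mismatch worth resolving before the board goes into service.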